One available routine efficiently computes the conditional entropy of ordinal patterns from a 1D time series in sliding windows, for ordinal-pattern orders 1 to 8 [1].

The Conditional Entropy Bottleneck (CEB) work tests its hypothesis experimentally by comparing the performance of CEB models with deterministic models and Variational Information Bottleneck (VIB) models on a variety of different datasets and robustness challenges.

A related reference is "V-Measure: A Conditional Entropy-Based External Cluster Evaluation Measure."
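To make the ordinal-pattern quantity concrete, here is a minimal sketch for a single window. It assumes a delay of 1, natural logarithms, and the common plug-in form "entropy of consecutive pattern pairs minus entropy of the leading pattern". The function names are hypothetical and this is not the routine referred to above, which also handles sliding windows.

```python
import math
from collections import Counter


def ordinal_patterns(series, order):
    """Ordinal pattern of every run of order + 1 consecutive points.

    A pattern is the tuple of within-window indices sorted by value
    (ties are broken by position because sorted() is stable).
    """
    width = order + 1
    return [
        tuple(sorted(range(width), key=lambda k: series[start + k]))
        for start in range(len(series) - width + 1)
    ]


def entropy(counts):
    """Plug-in Shannon entropy (in nats) of a Counter of occurrences."""
    total = sum(counts.values())
    return -sum(c / total * math.log(c / total) for c in counts.values())


def conditional_entropy_of_ordinal_patterns(series, order):
    """Estimate H(next pattern | current pattern) for one window of data."""
    patterns = ordinal_patterns(series, order)
    pairs = list(zip(patterns[:-1], patterns[1:]))
    if not pairs:
        raise ValueError("series is too short for the requested order")
    joint = Counter(pairs)
    current = Counter(first for first, _ in pairs)
    return entropy(joint) - entropy(current)


# A strictly increasing series and a strictly alternating series are both
# fully predictable, so the next pattern carries no new information.
ce_increasing = conditional_entropy_of_ordinal_patterns(list(range(20)), 2)
ce_alternating = conditional_entropy_of_ordinal_patterns([0, 1] * 4, 1)
```

Using the marginal of the observed pairs (rather than of all patterns) keeps the estimate non-negative, since the pair entropy can never be smaller than the entropy of its first component.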

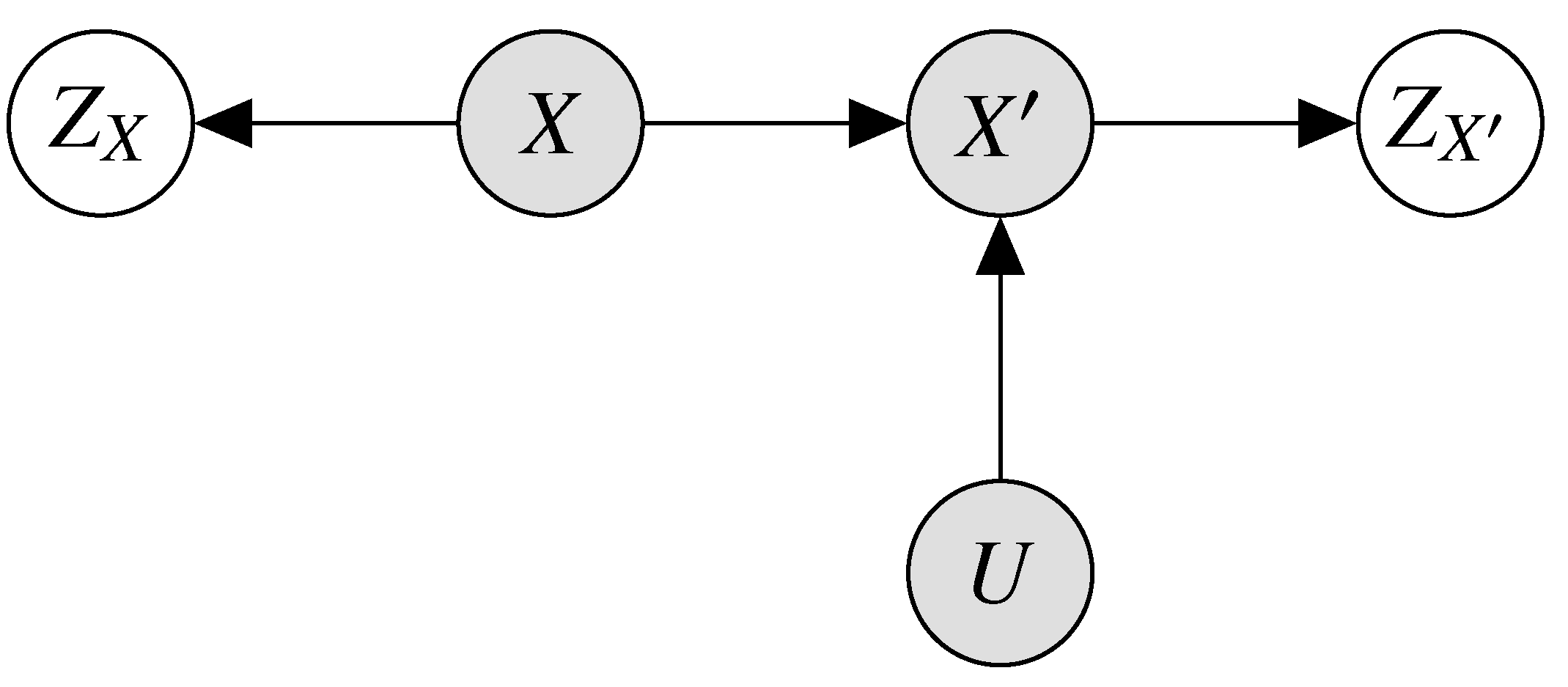

Lemma 3.2 of "Topological Conditional Entropy" states that, for a measure in P(Y × X) satisfying a vanishing condition, the associated entropy function, defined on the set of Radon probability measures on Y × X, is upper semi-continuous at that measure.

In the Conditional Entropy Bottleneck work, the Minimum Necessary Information (MNI) criterion is proposed for evaluating the quality of a model. In order to train models that perform well with respect to the MNI criterion, a new objective function is presented, the Conditional Entropy Bottleneck (CEB), which is closely related to the Information Bottleneck (IB).

Cite (ACL): Andrew Rosenberg and Julia Hirschberg.

For two discrete random variables $X, Y$ we define their conditional entropy to be $H(X \mid Y) = -\sum_{x, y} p(x, y) \log p(x \mid y)$.

This question is related to this question but I need a bit more guidance.
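The definition of conditional entropy for two discrete random variables can be sketched in a few lines. This is an illustrative example, assuming the standard form $H(X \mid Y) = -\sum_{x,y} p(x,y) \log \frac{p(x,y)}{p(y)}$ with natural logarithms; the function name and the dictionary layout of the joint distribution are choices made here, not taken from the text.

```python
import math
from collections import defaultdict


def conditional_entropy(joint):
    """H(X | Y) in nats, from a joint pmf given as {(x, y): probability}."""
    total = sum(joint.values())
    if not math.isclose(total, 1.0, abs_tol=1e-9):
        raise ValueError("joint probabilities must sum to 1")

    # Marginal p(y), obtained by summing the joint over x.
    p_y = defaultdict(float)
    for (_, y), p in joint.items():
        p_y[y] += p

    # Accumulate -p(x, y) * log p(x | y), skipping zero-probability cells.
    h = 0.0
    for (_, y), p in joint.items():
        if p > 0.0:
            h -= p * math.log(p / p_y[y])
    return h


# Two independent fair bits: knowing Y says nothing about X.
independent = {(x, y): 0.25 for x in (0, 1) for y in (0, 1)}
# X is a copy of Y: knowing Y removes all uncertainty about X.
copied = {(0, 0): 0.5, (1, 1): 0.5}

h_independent = conditional_entropy(independent)
h_copied = conditional_entropy(copied)
```

The two toy distributions mark the extremes: for independent variables the conditional entropy equals the entropy of $X$ itself, and for a deterministic relationship it is zero.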

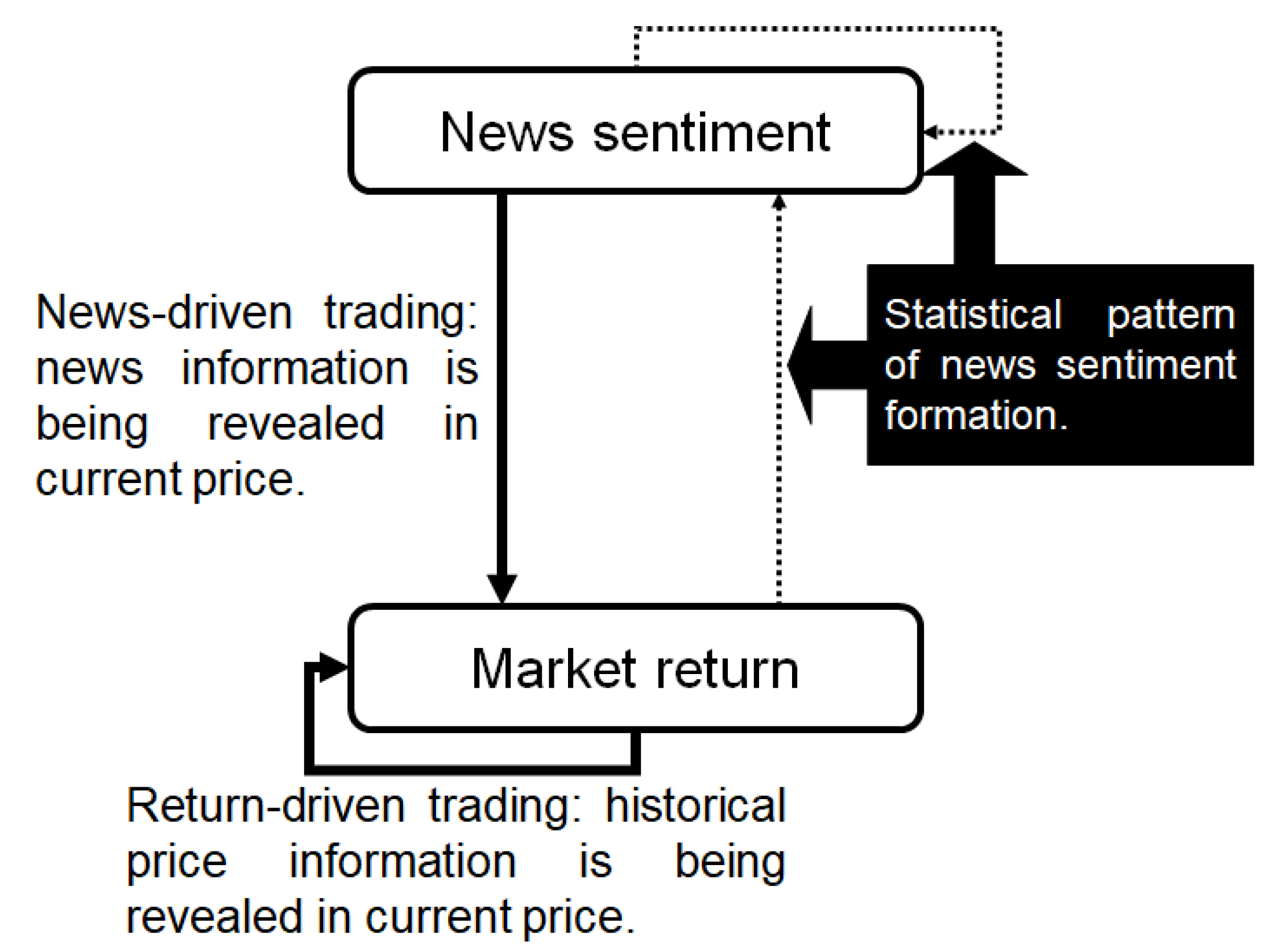

The Conditional Entropy Bottleneck, by Ian Fischer. Abstract: Much of the field of Machine Learning exhibits a prominent set of failure modes, including vulnerability to adversarial examples, poor out-of-distribution (OoD) detection, miscalibration, and willingness to memorize random labelings of datasets. We characterize these as failures of robust generalization, which extends the traditional measure of generalization as accuracy or related metrics on a held-out set. We hypothesize that these failures to robustly generalize are due to the learning systems retaining too much information about the training data.

Conditional entropy $H(m_i \mid f)$ defines the expected amount of information the set $m$ carries with respect to feature $f$ and movement $m_i$.

In Exercise 11.25 (Entropy and information) of Quantum Computation and Quantum Information by Nielsen and Chuang, it is required to show that the concavity of the conditional entropy may be deduced from strong subadditivity by introducing an auxiliary system $R$ into the problem.

Properties of the conditional entropy are similar to those of the entropy itself, since the conditional probability is a probability measure. The average conditional entropy $S(p \mid q)$ represents the residual uncertainty about the events $a$ when the events $b$ are known to have been observed already.

Causal entropy (Kramer, 1998; Permuter et al., 2008),

$$H(A^T \| S^T) \triangleq E_{A,S}\left[-\log P(A^T \| S^T)\right] = \sum_{t=1}^{T} H(A_t \mid S_{1:t}, A_{1:t-1}), \quad (3)$$

measures the uncertainty present in the causally conditioned distribution. The subtle but significant difference from conditional probability, $P(A \mid S) = \prod_{t=1}^{T} P(A_t \mid S_{1:T}, A_{1:t-1})$, serves as the underlying basis for our approach.

I have been reading a bit about conditional entropy, joint entropy, etc., but I found this: $H(X \mid Y, Z)$, which seems to imply the entropy associated with $X$ given $Y$ and $Z$ (although I'm not sure how to describe it).

Calculating conditional entropy given two random variables.
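Conditioning on two variables works the same way as conditioning on one: the pair $(Y, Z)$ is treated as a single variable, so $H(X \mid Y, Z) = H(X, Y, Z) - H(Y, Z)$. The sketch below estimates this from observed samples with a plug-in (frequency-count) estimator in bits; the function names and the column-index interface are hypothetical choices for illustration.

```python
import math
from collections import Counter


def entropy_from_counts(counts):
    """Plug-in Shannon entropy in bits of a Counter of occurrences."""
    total = sum(counts.values())
    return -sum(c / total * math.log2(c / total) for c in counts.values())


def conditional_entropy_given(samples, target, given):
    """Estimate H(target | given) in bits.

    samples: list of equal-length tuples, one per observation.
    target, given: tuples of column indices into each sample.
    Uses the chain rule H(T | G) = H(T, G) - H(G).
    """
    joint = Counter(tuple(row[i] for i in target + given) for row in samples)
    cond = Counter(tuple(row[i] for i in given) for row in samples)
    return entropy_from_counts(joint) - entropy_from_counts(cond)


# Toy data: X = Y XOR Z, with (Y, Z) uniform over all four combinations.
rows = [(y ^ z, y, z) for y in (0, 1) for z in (0, 1)]

# Given both Y and Z, X is fully determined.
h_x_given_yz = conditional_entropy_given(rows, (0,), (1, 2))
# Given Y alone, X is still a fair coin flip.
h_x_given_y = conditional_entropy_given(rows, (0,), (1,))
```

The XOR example shows why the extra conditioning variable matters: neither $Y$ nor $Z$ alone reduces the uncertainty about $X$, but together they remove it entirely.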
